
We use our eye gaze to communicate and coordinate with other humans. We also rely on the gaze information provided by those we interact with to help us understand their visual and mental perspective.

Aside from helping us navigate our dynamic interactions with others, the early development of these gaze processing abilities is also pivotal in the later development of language and social learning. However, it is also well established that these abilities develop differently in autism and schizophrenia. My research aims to understand how the brain supports gaze processing during interactions in typical development, autism and schizophrenia.

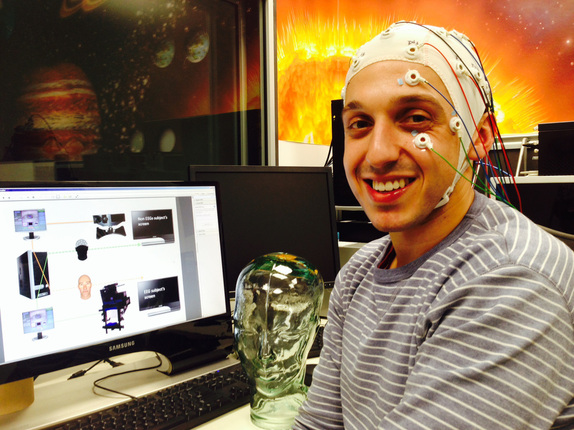

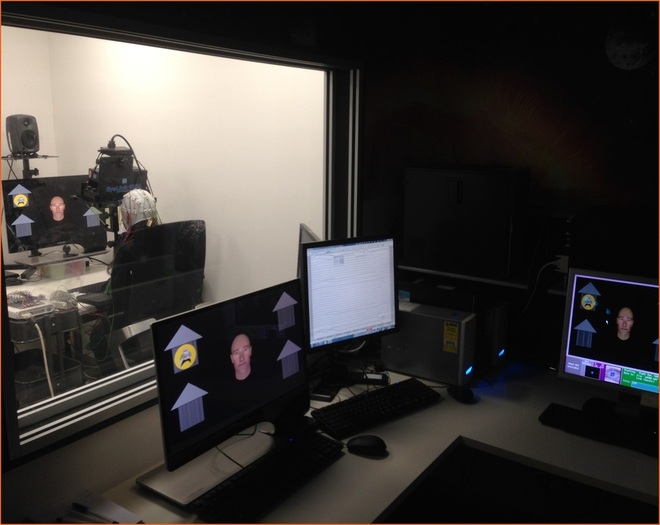

To achieve this, I have been developing virtual reality paradigms to simulate (seemingly) genuine interactions in laboratory settings. This allows us to measure the behavioural and neural effects associated with gaze-based communication during ecologically valid interactions, whilst maintaining full experimental control.

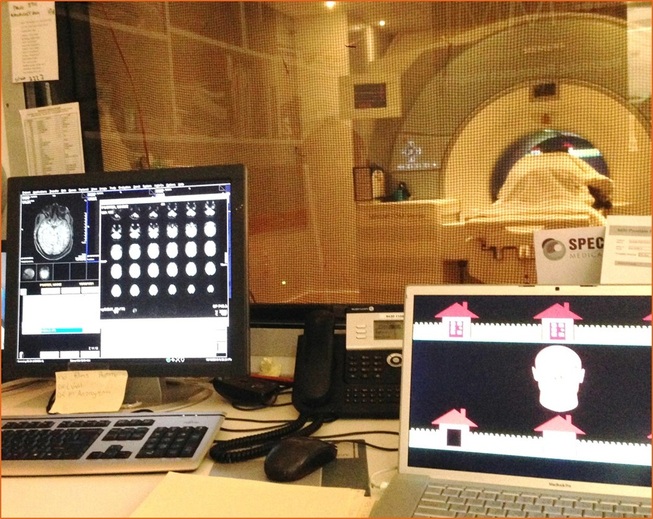

Typically, I have participants interact with a virtual character on a screen to complete cooperative tasks. Participants believe that the virtual character is controlled by another human via live infrared eye-tracking, and that they are controlling an avatar that their partner can see in the same way.

In reality, the virtual character's gaze behaviour is controlled by a gaze-contingent algorithm that uses the online recordings of the participant's eye movements to program contingent responses. The result is the experience of a genuine interaction.
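As a rough illustration of what a gaze-contingent rule of this kind might look like, here is a minimal Python sketch. It is not the algorithm used in these studies. The screen regions, the three-sample dwell criterion, the action names, and the rule itself (the character returns eye contact, then follows the participant's next fixation) are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, Optional, Sequence, Tuple


@dataclass(frozen=True)
class Region:
    """A rectangular area of interest on the screen, in pixels (hypothetical layout)."""
    name: str
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, x: float, y: float) -> bool:
        return self.left <= x <= self.right and self.top <= y <= self.bottom


def region_at(x: float, y: float, regions: Sequence[Region]) -> Optional[str]:
    """Return the name of the first region containing the gaze point, if any."""
    for region in regions:
        if region.contains(x, y):
            return region.name
    return None


def contingent_responses(
    samples: Iterable[Tuple[float, float]],
    regions: Sequence[Region],
    face: str = "face",
    dwell_samples: int = 3,
) -> Iterator[Tuple[int, str]]:
    """Yield (sample_index, action) pairs for the virtual character.

    Assumed rule: a fixation is confirmed once `dwell_samples` consecutive
    gaze samples land in the same region. A fixation on the character's face
    counts as eye contact; the next confirmed fixation elsewhere is treated
    as a bid for joint attention, and the character looks at that region.
    """
    current: Optional[str] = None
    run = 0
    made_eye_contact = False
    for index, (x, y) in enumerate(samples):
        name = region_at(x, y, regions)
        if name == current:
            run += 1
        else:
            current, run = name, 1
        # Act only at the moment a fixation on a known region is confirmed.
        if current is None or run != dwell_samples:
            continue
        if current == face:
            made_eye_contact = True
            yield index, "look_at_participant"
        elif made_eye_contact:
            made_eye_contact = False
            yield index, f"look_at_{current}"


# Hypothetical screen layout and a short stream of gaze samples.
regions = [
    Region("face", 400, 100, 600, 300),
    Region("left", 50, 400, 250, 600),
    Region("right", 750, 400, 950, 600),
]
samples = [(500, 200)] * 3 + [(0, 0)] + [(100, 500)] * 3
actions = list(contingent_responses(samples, regions))
```

With these samples, the character first returns eye contact and then follows the participant's gaze to the left target. A real implementation would also need to handle eye-tracker noise, blinks and response latencies, which this sketch ignores.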

In addition to using these techniques to explore the neural correlates of gaze-based interactions, my research is also concerned with the critical issues surrounding social neuroscience methods, and with determining the optimal protocol for measuring the interactive phenomena of social cognition.

More recently, we have been using immersive virtual reality methods to explore how multiple non-verbal gestures (e.g., eye gaze, head movements and hand movements) are displayed and perceived at the same time to support coordination during complex and dynamic collaborative interactions. These methods have allowed us to examine genuine dyadic social interactions with increasing validity without compromising experimental control or objectivity in our measures of attention and behaviour.

Which brain regions support our ability to achieve joint attention?


Joint attention refers to our ability to coordinate our attention with another person and an object or event in our environment. We can do this either by initiating joint attention or by responding to another person's bid for joint attention. The end product is two people attending to the same thing.

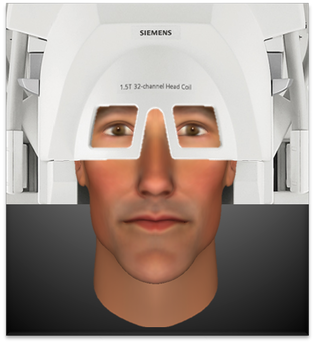

Whilst joint attention can be facilitated by language and pointing gestures, it can also be achieved using eye gaze alone. In fact, joint attention first develops in the form of non-verbal gaze exchanges. In a functional magnetic resonance imaging (fMRI) study, we discovered that initiating and responding to joint attention bids are supported by common brain regions in the frontal, temporal and parietal lobes. Although the observed activation also differed between the two, this overlap suggests that these processes, despite being very different, involve common computations. Interestingly, the regions common to initiating and responding to joint attention have also been implicated in tasks that involve representing another person's perspective in relation to one's own. You can read more about the study, and our novel interactive paradigm here.

When does the brain determine the social significance of a gaze shift?

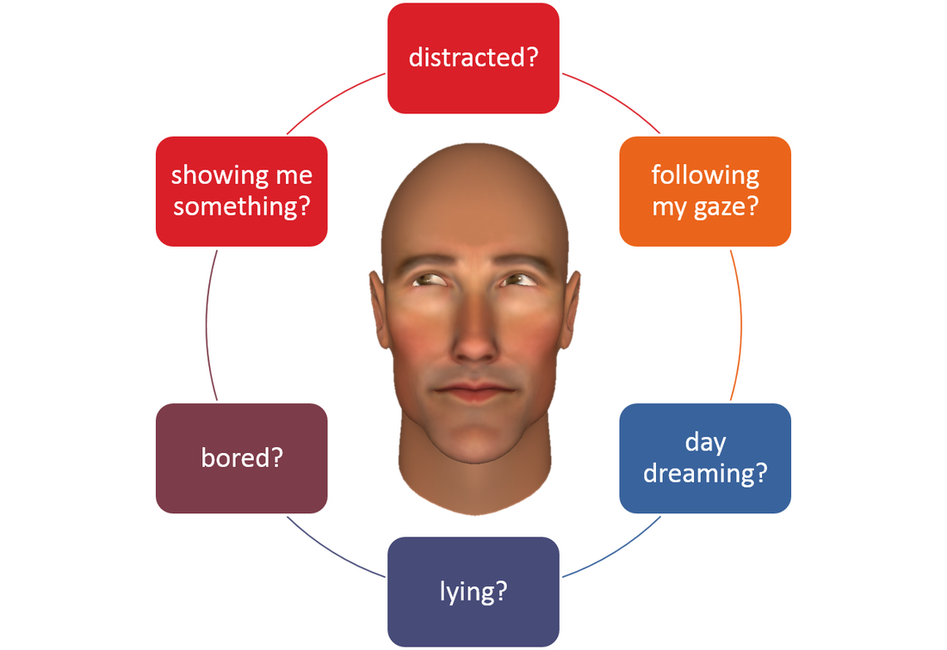

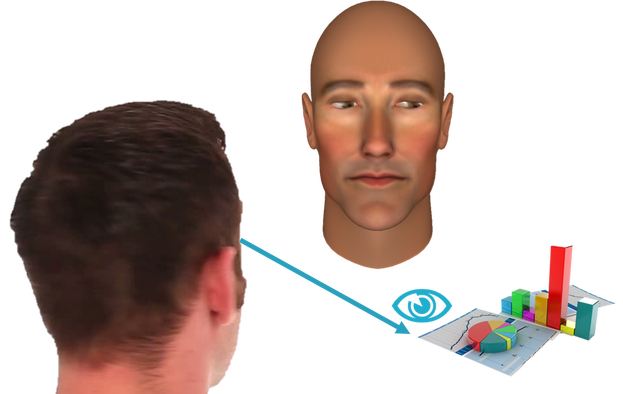

Eye gaze provides us with important information about what others are thinking and feeling. We constantly evaluate gaze information to understand the perspective of others, and how that might be important to us. For instance, if you are talking to someone, and they shift their gaze away from you, what could the significance of that be? They may be...

- distracted by something interesting happening behind you

- intentionally trying to guide your attention somewhere

- deep in thought or daydreaming

- bored of you

- avoiding eye contact because they are embarrassed or lying

We can usually disambiguate the significance of a gaze shift by evaluating it in its context. For instance, if I were talking to someone about my research, and they looked at the data that I was looking at, then I would interpret that gaze shift as:

"my friend is responding to my bid for joint attention, and may even be interested in my data!"

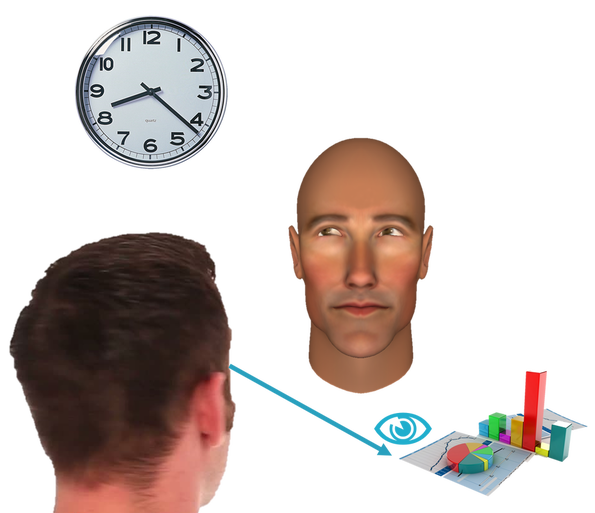

If, on the other hand, they looked at the clock, I might think:

"my friend is bored, and is not interested in my data!"


In a series of event-related potential (ERP) studies, we have also investigated the time course of brain activity when people make these evaluations during gaze-based interactions. This has delivered new information about when the brain discriminates shifts in gaze that are perceptually identical, but signal different social outcomes.
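For readers unfamiliar with ERPs, the general idea of comparing two conditions over time can be sketched as follows. This is a toy illustration of the generic technique (averaging time-locked epochs and taking a difference wave), not the analysis pipeline used in these studies. The function names, the voltage values, the threshold and the sampling period are all invented for the example, and a real analysis would use statistical tests rather than a fixed threshold.

```python
from statistics import mean
from typing import List, Optional, Sequence


def average_erp(epochs: Sequence[Sequence[float]]) -> List[float]:
    """Average equal-length epochs (one per trial) sample by sample."""
    if not epochs:
        raise ValueError("at least one epoch is required")
    length = len(epochs[0])
    if any(len(epoch) != length for epoch in epochs):
        raise ValueError("all epochs must have the same number of samples")
    return [mean(column) for column in zip(*epochs)]


def difference_wave(erp_a: Sequence[float], erp_b: Sequence[float]) -> List[float]:
    """Subtract one condition's ERP from the other, sample by sample."""
    return [a - b for a, b in zip(erp_a, erp_b)]


def first_divergence_ms(
    diff: Sequence[float], threshold_uv: float, sample_period_ms: float
) -> Optional[float]:
    """Return the latency of the first sample whose absolute difference
    reaches the threshold, or None if the conditions never diverge.
    (A deliberately simplistic, assumed criterion.)"""
    for index, value in enumerate(diff):
        if abs(value) >= threshold_uv:
            return index * sample_period_ms
    return None


# Invented data: two trials per condition, three samples each, in microvolts.
condition_a = [[0.0, 1.0, 4.0], [0.0, 1.0, 6.0]]
condition_b = [[0.0, 1.0, 2.0], [0.0, 1.0, 2.0]]

erp_a = average_erp(condition_a)
erp_b = average_erp(condition_b)
diff = difference_wave(erp_a, erp_b)
onset = first_divergence_ms(diff, threshold_uv=1.0, sample_period_ms=4.0)
```

Here the two invented conditions are identical for the first two samples and diverge at the third, so `onset` marks the point at which the averaged responses begin to differ.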

We are also interested in the influence of attention and perceived agency on these neural responses. For instance, across several studies, we have shown that the brain discriminates these gaze shifts more sensitively if the observer believes they are interacting with a virtual agent controlled by another human, rather than an artificial (i.e., computer-controlled) agent.